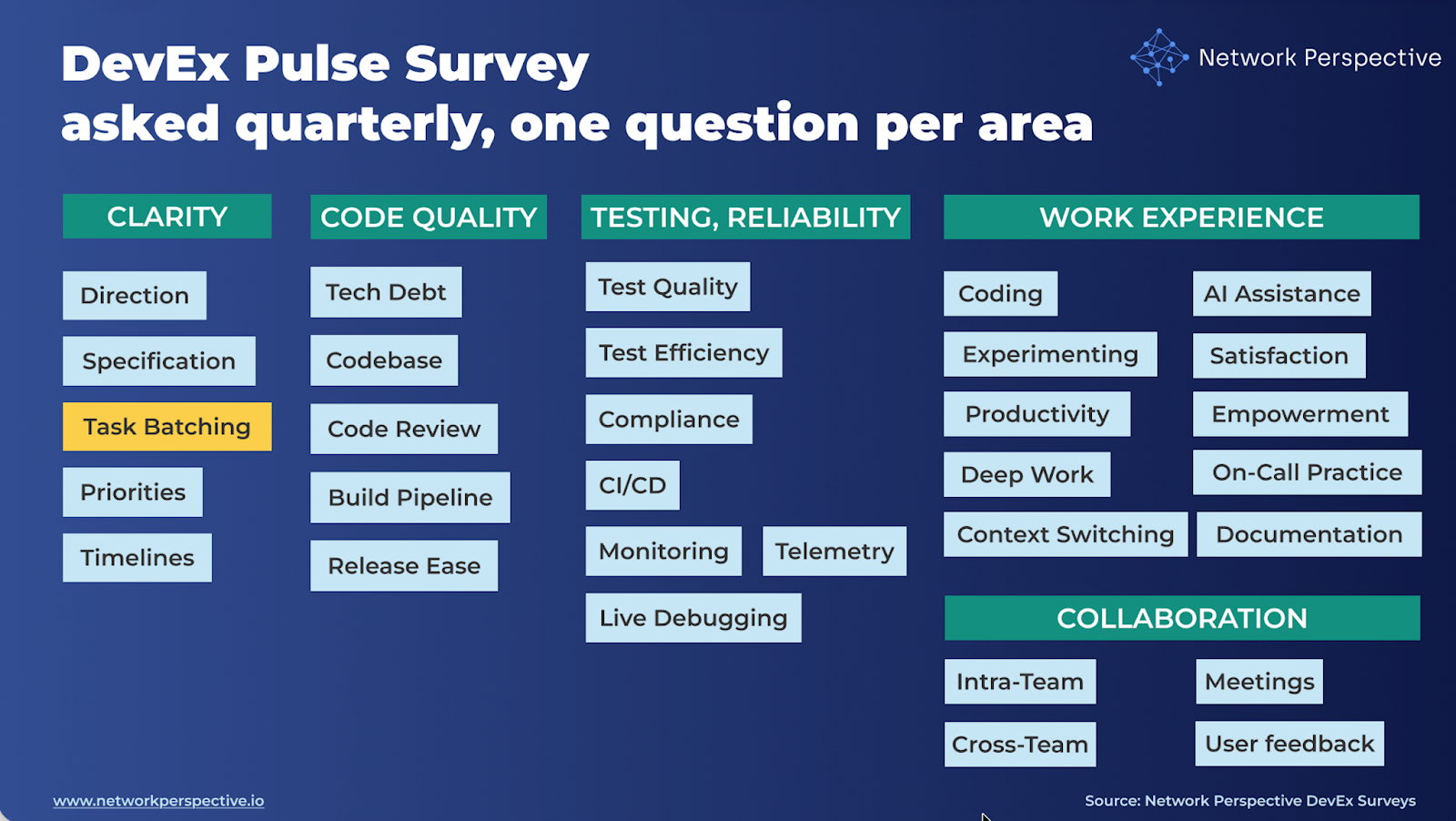

In our DevEx AI tool, we use two sets of survey questions: DevEx Pulse (one question per area to track overall delivery performance) and DevEx Deep Dive (a focused root-cause diagnostic when something needs attention).

DevEx Pulse tells us where friction is. DevEx Deep Dive tells us why it exists.

Let’s take a closer look at task batching. If the Pulse question “Our tasks are well-sized for efficient work” receives low scores and developers’ comments reveal significant friction and blockers, what should you do next?

Here are 14 deep dive questions you can ask your developers to uncover the causes of friction in task batching, along with guidance on how to interpret the results, common patterns engineering teams encounter, and practical first steps for improvement. This will help you pinpoint what’s causing the problem and fix it on your own, or move faster with our DevEx AI tool and expert guidance.

The real question is whether tasks are easy to start, work on, and finish, or whether they grow, get blocked, and spill over.

Deep dive questions should help you map how task batching flows through your delivery process and identify where it breaks down:

Clarity → Size → Independence → Flow → Delivery → Intent → Cost

Here’s how the DevEx AI tool helps uncover this.

Are tasks a good size to work on?

Is work clear enough when it starts?

Can work move forward without waiting on others?

Can tasks be finished smoothly once started?

Are tasks easy to review and ship?

Why are tasks sized the way they are?

What’s missing or not working well for you here?

Are tasks easy to start, work on, and finish — or do they grow, get blocked, or spill over?

Here’s how the DevEx AI tool helps make sense of the results.

Questions

What this section tests

Whether tasks are small and focused enough to work on comfortably.

How to read scores

Key insight

When tasks feel heavy, it’s usually because scope and focus are mixed together.

Open-ended comments

Prompt: “What could be better here?”

How to read responses

Key insight

Task size complaints usually point to how work is grouped, not effort levels.

Questions

What this section tests

Whether work is ready to be broken down before tasks are created.

How to read scores

Key insight

Poor batching often starts upstream, before tasks even exist.

Open-ended comments

How to read responses

Key insight

Hidden work is a sign of starting too early, not bad estimation.

Questions

What this section tests

Whether tasks are sliced to reduce waiting and coordination.

How to read scores

Key insight

Large tasks are often a workaround for dependency pain.

Open-ended comments

How to read responses

Key insight

When work waits, tasks grow to make waiting “worth it”.

Questions

What this section tests

Whether tasks fit human focus and time limits.

How to read scores

Key insight

Tasks that don’t fit kill momentum and increase cognitive load.

Open-ended comments

How to read responses

Key insight

Flow problems show up as slow progress, not complaints.

Questions

What this section tests

Whether tasks are sized for fast feedback and safe delivery.

How to read scores

Key insight

Delivery pain is often where batching problems become visible first.

Open-ended comments

How to read responses

Key insight

Big tasks hide risk until it’s expensive to fix.

Questions

What this section tests

Whether batching decisions are deliberate or pressure-driven.

How to read scores

Key insight

Task size reflects what the organization optimizes for.

Open-ended comments

How to read responses

Key insight

When pressure rises, tasks inflate.

How common: Often

Pattern:

Size ↓ | Flow ↓ | Delivery ↓

Interpretation

Tasks exceed human and system flow limits.

How common: Common

Pattern:

Readiness ↓ | Known ↓ | Flow ↓

Interpretation

Tasks are created before clarity and decisions exist.

How common: Medium

Pattern:

Dependencies ↓ | Unblocked ↓ | Pressure ↓

Interpretation

Tasks grow to survive waiting and coordination cost.

How common: High in delivery-driven teams

Pattern:

Pressure ↓ | Size ↓ | Fits ↓

Interpretation

Task size is optimized for commitments, not flow.
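The four patterns above can be read as a lookup from a set of low-scoring signals to an interpretation. Here is a minimal sketch in Python of that reading. It assumes scores on a 1 to 5 scale and treats anything below 3.0 as "low"; both values are illustrative assumptions, not thresholds taken from the DevEx AI tool, and the names `LOW_THRESHOLD`, `PATTERNS`, and `match_patterns` are hypothetical.

```python
# Assumption: scores are on a 1-5 scale and anything below 3.0 counts as "low".
LOW_THRESHOLD = 3.0

# Each pattern from the text: the signals that are low together -> interpretation.
PATTERNS = {
    frozenset({"Size", "Flow", "Delivery"}):
        "Tasks exceed human and system flow limits.",
    frozenset({"Readiness", "Known", "Flow"}):
        "Tasks are created before clarity and decisions exist.",
    frozenset({"Dependencies", "Unblocked", "Pressure"}):
        "Tasks grow to survive waiting and coordination cost.",
    frozenset({"Pressure", "Size", "Fits"}):
        "Task size is optimized for commitments, not flow.",
}


def match_patterns(scores: dict[str, float]) -> list[str]:
    """Return the interpretation of every pattern whose signals are all low."""
    low = {name for name, score in scores.items() if score < LOW_THRESHOLD}
    return [text for signals, text in PATTERNS.items() if signals <= low]


# Hypothetical survey result for one team.
team_scores = {
    "Size": 2.1, "Flow": 2.5, "Delivery": 2.8,
    "Readiness": 4.0, "Known": 3.9, "Dependencies": 3.6,
    "Unblocked": 3.4, "Pressure": 3.2, "Fits": 3.1,
}
print(match_patterns(team_scores))
```

A team can match more than one pattern at once, which is why the function returns a list rather than a single label.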

Work looks ready but isn’t.

Dependencies are social, not technical.

Tasks start strong but are too large.

Good intent overridden by deadlines.

Contradictions show where the system forces people to compensate.

What NOT to say

What TO say (use this framing)

“Task size reflects how ready and unblocked the work is — not developer skill.”

“Large tasks are usually a signal of pressure, dependencies, or unclear work.”

Show only three things:

Here’s how the DevEx AI tool will guide you toward your first actions.

Signal: Tasks are too big or mixed.

First steps

Small operational change - add a simple planning check: “If this task takes more than 2–3 days or touches multiple systems, split it.”
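A planning check like this is simple enough to automate in a backlog script. The sketch below is one possible encoding in Python. It assumes the upper bound of the "2–3 days" range (3 days) as the cutoff and reads "multiple systems" as more than one; the `Task` fields and the `should_split` helper are hypothetical and not part of any specific tracker's API.

```python
from dataclasses import dataclass, field

# Assumptions drawn from the planning check: 3 days is the upper bound of
# "2-3 days", and "multiple systems" means more than one.
MAX_DAYS = 3
MAX_SYSTEMS = 1


@dataclass
class Task:
    title: str
    estimated_days: float
    systems: set[str] = field(default_factory=set)


def should_split(task: Task) -> bool:
    """Return True when the task is too long or touches too many systems."""
    return task.estimated_days > MAX_DAYS or len(task.systems) > MAX_SYSTEMS


# Hypothetical backlog items.
backlog = [
    Task("Add export button", 2, {"web"}),
    Task("Rework billing flow", 5, {"billing"}),
    Task("Sync profile data", 1, {"web", "crm"}),
]
for task in backlog:
    if should_split(task):
        print(f"Split before starting: {task.title}")
```

The thresholds are constants on purpose, so a team can tune them to its own cadence without touching the check itself.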

Signal: Tasks start before work is understood.

First steps

Small operational change - add a lightweight “Ready to Start” checklist:

Signal: Tasks wait on others.

First steps

Example: “Does this require another team or system change?”

Small operational change - add dependency mapping during task creation: “Who else might this affect?”

Signal: Tasks start but stall or spill over.

First steps

Small operational change - add a daily question in stand-ups: “Is this task still finishable?”

Signal: Tasks are too big to review or ship.

First steps

Small operational change - adopt a rule: “If a PR feels hard to review, the task was too large.”

Signal: Tasks are sized to satisfy deadlines.

First steps

Small operational change - introduce “smallest useful step” planning before task creation.

(Size ↓ + Flow ↓ + Delivery ↓)

First step

Introduce smaller vertical slices:

Instead of: “Build feature X”, create tasks like:

Goal: deliver value in small increments.

(Readiness ↓ + Known ↓ + Flow ↓)

First step

Add a 10-minute clarification step before task creation. Ask:

This reduces hidden work dramatically.

(Dependencies ↓ + Unblocked ↓)

First step

Make dependencies visible early.

Simple practice: Every task lists who or what it depends on. Teams can then reorder work before starting.
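Once every task lists what it depends on, reordering is a topological sort: start with the items nothing else is waiting on. The following Python sketch uses the standard library's `graphlib.TopologicalSorter`. The task names and the `start_order` helper are made up for illustration, and real dependencies (people, approvals, other teams) would need to be entered by hand.

```python
from graphlib import TopologicalSorter


def start_order(depends_on: dict[str, set[str]]) -> list[str]:
    """Order tasks so that each one comes after everything it depends on."""
    return list(TopologicalSorter(depends_on).static_order())


# Hypothetical tasks: each key maps to the tasks or decisions it waits on.
depends_on = {
    "ship checkout page": {"payment API endpoint", "design sign-off"},
    "payment API endpoint": {"vendor contract"},
    "design sign-off": set(),
    "vendor contract": set(),
}

order = start_order(depends_on)
print(order)
```

If two tasks depend on each other, `static_order()` raises `graphlib.CycleError`, which is itself a useful signal that the work needs to be sliced differently.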

(Pressure ↓ + Size ↓ + Fits ↓)

First step

Change the planning language from “Finish this feature” to “Deliver the smallest working step toward this goal.” This alone often shrinks tasks by 2–3×.

Contradictions reveal system tension.

Work looks clear but hides complexity.

First step

Require every task to include an answer to “What could surprise us?” This exposes hidden unknowns early.

Dependencies exist but are informal.

First step

Add one question at task creation: “Who might we need to coordinate with?”

Tasks start well but grow too large.

First step

Introduce mid-task splitting. If a task grows:

Teams want good batching but deadlines override.

First step

Introduce “smallest releasable step” planning. Before committing, ask: “What is the smallest version we could ship?”

Improve task size by fixing the system before the task. Large tasks rarely come from developer behavior. They usually come from:

Fix those first.

Introduce a “Smallest Step First” planning habit. Before creating tasks, ask: “What is the smallest useful change we can deliver next?” This single change improves:

all at once.

What you’ve seen here is only a small part of what the DevEx AI platform can do to improve delivery speed, quality, and ease.

If your organization struggles with fragmented metrics, unclear signals across teams, or the frustrating feeling of seeing problems without knowing what to fix, DevEx AI may be exactly what you need. Many engineering organizations operate with disconnected dashboards, conflicting interpretations of performance, and weak feedback loops — which leads to effort spent in the wrong places while real bottlenecks remain untouched.

DevEx AI brings these scattered signals into one coherent view of delivery. It focuses on the inputs that shape performance — how teams work, where friction accumulates, and what slows or accelerates progress — and translates them into clear priorities for action. You gain comparable insights across teams and tech stacks, root-cause visibility grounded in real developer experience, and guidance on where improvement efforts will have the highest impact.

At its core, DevEx AI combines targeted developer surveys with behavioral data to expose hidden friction in the delivery process. AI transforms developers’ free-text comments — often a goldmine of operational truth — into structured insights: recurring problems, root causes, and concrete actions tailored to your environment.

The platform detects patterns across teams, benchmarks results internally and against comparable organizations, and provides context-aware recommendations rather than generic best practices.

Progress on these input factors is tracked over time, enabling teams to verify that changes in ways of working are actually taking hold, while leaders maintain visibility without micromanagement. Expert guidance supports interpretation, prioritization, and the translation of insights into measurable improvements.

To understand whether these changes truly improve delivery outcomes, DevEx AI also measures DORA metrics — Deployment Frequency, Lead Time for Changes, Change Failure Rate, and Mean Time to Recovery — derived directly from repository and delivery data. These output indicators show how software performs in production and whether improvements to developer experience translate into faster, safer releases.
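For readers who want to see what these four metrics amount to, here is a rough Python sketch using their commonly used definitions. It is not how DevEx AI computes them. The `Deployment` record, its field names, and the choice of median for lead time are assumptions made for illustration.

```python
from dataclasses import dataclass
from datetime import datetime
from statistics import mean, median
from typing import Optional


@dataclass
class Deployment:
    committed_at: datetime                  # when the change was committed
    deployed_at: datetime                   # when it reached production
    failed: bool = False                    # did it cause a production failure?
    restored_at: Optional[datetime] = None  # when service was restored, if it failed


def hours(start: datetime, end: datetime) -> float:
    return (end - start).total_seconds() / 3600


def dora_metrics(deploys: list[Deployment], window_days: int) -> dict[str, float]:
    """Compute the four DORA metrics over a window of deployments."""
    if not deploys:
        raise ValueError("need at least one deployment")
    failures = [d for d in deploys if d.failed]
    recovered = [d for d in failures if d.restored_at is not None]
    return {
        "deployment_frequency_per_day": len(deploys) / window_days,
        "lead_time_hours": median(hours(d.committed_at, d.deployed_at) for d in deploys),
        "change_failure_rate": len(failures) / len(deploys),
        "mean_time_to_recovery_hours": (
            mean(hours(d.deployed_at, d.restored_at) for d in recovered)
            if recovered else 0.0
        ),
    }


# Hypothetical two-day window with two deployments, one of which failed.
deploys = [
    Deployment(datetime(2024, 1, 1, 9), datetime(2024, 1, 1, 11)),
    Deployment(datetime(2024, 1, 2, 9), datetime(2024, 1, 2, 13),
               failed=True, restored_at=datetime(2024, 1, 2, 14)),
]
metrics = dora_metrics(deploys, window_days=2)
print(metrics)
```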

By combining input metrics (how work happens) with output metrics (what results are achieved), the platform creates a closed feedback loop that connects actions to outcomes, helping organizations learn what actually drives better delivery and where further improvement is needed.

Returning to our topic — task batching — you can explore proven practices grounded in hundreds of interviews our team has conducted with engineering leaders.